By Piers Smith

It is an understatement to say that 2020 has been a challenging year for those in healthcare. COVID-19 has overwhelmed healthcare workers across the nation and changed the way healthcare is provided and received.

The coronavirus has caused many healthcare providers to adopt a form of conversational AI, the most common being chatbots, to assist patients as front-line workers become burdened with other tasks. While they are not meant to diagnose individuals, text-based chatbots provide patients with a contact-free solution where they can ask questions, fill out forms, and screen their symptoms from the comfort of their own homes.

However, a study published in the Journal of Consumer Research indicates that people don’t fully trust text-based AI. It is human nature to view oneself as unique, and the same applies to the way we view our symptoms.

The research showed that individuals do not believe programmed algorithms within these chatbots can accurately detect their symptoms because they think only a human doctor can understand how they feel.

Text-based conversational AI is clearly lacking when it comes to making patients feel understood. While these forms of conversational AI can answer questions, fill out forms, and give helpful advice without having patients set foot outside, people can’t seem to get over the fact that at the end of the day, they are talking to a robot.

Enter digital humans.

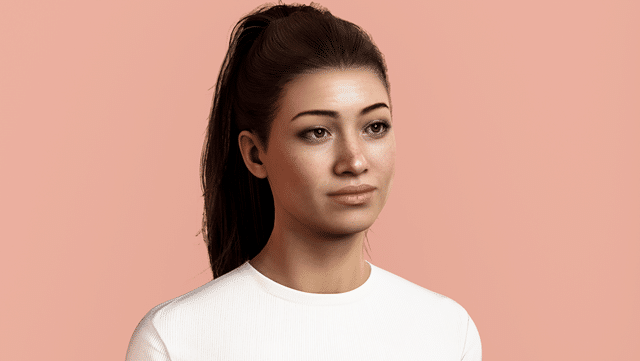

Digital humans are a form of conversational AI that look, interact, and act like real humans. Available 24 hours a day, seven days a week, and equipped with the ability to speak over 40 languages, digital humans can be used anywhere, by anyone, at any time.

When patients interact with a hyper-realistic digital avatar that has a personality, facial expressions, and voice inflections, they are put at ease. They are more likely to trust a digital human than a text-based chatbot because the digital human adds empathy to the equation.

Take a study conducted by the University of Southern California, for example. Researchers wanted to see how returning soldiers disclosed information about post-traumatic stress disorder symptoms to AI.

In the first phase of the trial, the veterans were given the option of interacting with a faceless AI system or a person. The results showed that the veterans who chose the faceless system were more likely to disclose details about their symptoms than their counterparts who talked to a human, since they felt like they weren’t being judged.

In the second phase of the study, researchers named the faceless system and added a computer-generated face and body, creating Ellie, the digital human. They found veterans were twice as likely to report PTSD symptoms to Ellie as to the faceless system because they established a connection with her, which in turn allowed them to feel comfortable enough to disclose more.

This study indicates that adding a face and other human characteristics to existing AI technology can make people more trusting and can even encourage them to be more transparent than they would be with a real human. People need compassion and empathy when it comes to discussing problems with their mental and physical health, and digital humans can provide that while still offering a judgment-free exchange.

While these digital humans can’t diagnose individuals, they can recommend that patients see a doctor after screening their symptoms. This frees up time for frontline healthcare workers and reduces the risk of spreading illness.

My colleagues and I developed a digital human named Sophie that can pre-screen individuals for symptoms of COVID-19. While Sophie did not attempt to diagnose patients with COVID-19, she was programmed to identify a baseline of risk factors and recommend the next steps for the individual.

Along with conducting remote triage, digital humans can assist patients with managing major life events, keep track of medication, support the check-out process from hospitals, and aid rehabilitation and mental health efforts. It is impossible for healthcare professionals to keep track of every patient who has been discharged and continuously check up on them to ensure they are doing their required aftercare. However, digital humans can engage these patients in their homes and, more importantly, keep them engaged in the post-care process by building rapport with them.

While traditional, text-based chatbots may get the job done, they are not building meaningful connections with patients or getting them to follow up. By adding a name, face, and personality to a chatbot, providers will start to see a shift in the number of patients engaging with their conversational AI, as well as an increase in disclosure from patients.

Piers Smith is a Healthcare AI Architect at UneeQ.